South Africa's banking system is not approaching an AI crisis; it is already inside one, and the most dangerous aspect of it is that the instruments causing it are the same instruments that leadership believes are solving it. Artificial intelligence, deployed at scale across credit adjudication, fraud detection, customer behavioural modelling, regulatory compliance, and liquidity management, has introduced a threat surface that no current governance framework has adequately named, let alone measured. The institutional comfort derived from AI's demonstrable efficiency gains is itself the most acute liability: institutions that believe they have controlled a technology they do not fully understand have not secured a competitive advantage; they have built a sophisticated illusion of it.

What is visible to boards and regulators is the capability layer: the gains in processing speed, the reduction in manual decisioning, and the improvement in customer acquisition metrics. What is invisible, and therefore ungoverned, is the systemic risk layer: the emergent failure modes, the correlated model errors, the third-party vendor concentration, the adversarial attack surfaces, and the regulatory opacity that AI, deployed without corresponding governance rigour, introduces into systems whose stability is not merely a commercial concern but a matter of national economic integrity.

Call this Mythos AI Shock: the systemic rupture produced when institutional mythology about artificial intelligence collides, without warning and without adequate preparation, with the operational reality of what an inadequately governed and structurally under-secured AI system does to financial systems under stress. The mythology is specific, well-rehearsed, and commercially convenient. It holds that AI is a tool, subject to the same governance frameworks as any other digital asset; that AI risk is primarily a technological concern, manageable by chief technology officers and information security teams operating within existing enterprise risk structures; and that South African banks, having invested substantially in digital modernisation strategies, have therefore made corresponding and proportionate progress on AI resilience.

Each of these propositions is analytically wrong, structurally consequential, and, in certain institutions, already generating exposures that have not yet been attributed to their true cause. The mythology endures precisely because its consequences have not yet arrived with sufficient drama to force institutional reckoning at the level of urgency the facts demand. When they do, the delay will be indistinguishable from negligence, and the distinction between the two will not be available as a defence to the board.

Mythos AI Defined: The Cybersecurity Fears It Raises and The Systemic Risks It Imposes

Mythos AI refers to a class of artificial intelligence capability that autonomously discovers, prioritises, and exploits vulnerabilities across interconnected digital systems at machine speed, thereby collapsing the temporal and structural assumptions upon which existing risk and governance frameworks depend. It is not merely an acceleration of existing cyber threats; it is their reconstitution into a form of organised, automated pressure that renders traditional defensive sequencing ineffective.

The cybersecurity concern it raises is immediate and structural: vulnerabilities are no longer discovered sporadically but identified continuously, no longer exploited selectively but executed simultaneously across shared infrastructure. This creates a condition of controlled instability, where systems appear operational while being persistently mapped, tested, and penetrated beneath the surface. The risk is not confined to individual institutions; it extends across the financial system through common architectures, shared vendors, and synchronised operations, converting local weaknesses into systemic fault lines. Under these conditions, containment becomes uncertain, response windows collapse, and governance frameworks designed for human-paced threats become misaligned with machine-driven realities.

The Illusion of Control: Stability As A Concealed Instability

The most dangerous assumption in modern banking is not that systems are secure, but that security itself remains a controllable variable. Institutions project resilience while silently accumulating exposure; they defend against yesterday’s threats while accelerating towards tomorrow’s failures. The sector prides itself on regulatory compliance, yet compliance increasingly measures what has already become obsolete. This is not a paradox to be admired; it is a contradiction to be dismantled. The emergence of advanced artificial intelligence systems capable of autonomously discovering and exploiting software vulnerabilities has introduced a condition where defence lags permanently behind offence. The result is a controlled disorder, a structured fragility masked as operational stability. South African banks, like their global counterparts, operate within tightly coupled technological ecosystems, which simultaneously enhance efficiency and amplify systemic exposure. What appears as interconnected strength becomes synchronised vulnerability under machine-speed adversarial conditions. The question is no longer whether breaches will occur; the question is whether institutions can withstand the velocity and simultaneity of those breaches.

The Mythos AI Threat Deconstructed: How Institutions Misnamed the Threat and Missed the Risk

The first error is definitional, and it precedes every subsequent one. Financial institutions have classified artificial intelligence as a capability risk: they ask whether AI will work effectively enough to deliver the returns projected in their business cases. They have not, with anything approaching systemic rigour, classified it as a systemic risk: they have not asked what happens when AI works exactly as designed but within an environment the designers did not model, or what happens when malicious actors harness it for illicit ends. The capability risk question and the systemic risk question are not the same, and the failure to recognise their difference is not a minor analytical imprecision; it is a foundational governance failure with compounding consequences.

The capability risk question has been answered, imperfectly but repeatedly, by technology vendors whose incentive structures reward deployment over governance, by management consultants who are engaged to accelerate AI adoption rather than to audit its risk profile, and by pilot programmes that measure performance over short time horizons that do not capture tail-risk dynamics. The systemic risk question has barely been posed.

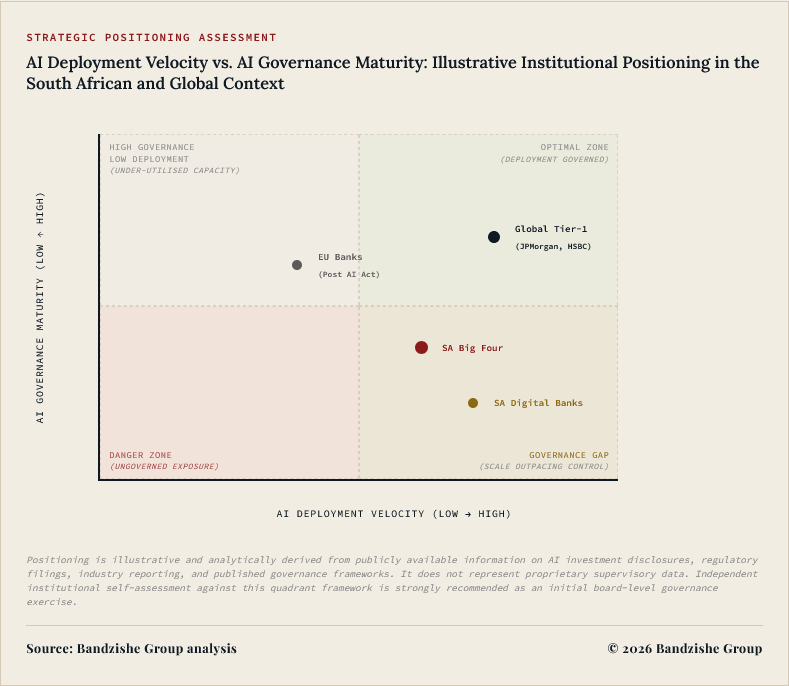

In South Africa's financial sector, the conditions that make the answer to that question consequential are more pronounced than in almost any peer economy: high institutional concentration, legacy infrastructure fragility, a macroeconomic environment of sustained stress, and a regulatory framework that has not yet developed the specialised instruments AI governance requires. The disparity between AI deployment velocity and AI governance maturity is not a management oversight; it is a structural fault line, and fault lines do not remain dormant indefinitely.

The institutional mythology about AI in banking rests upon three principal tenets, each internally coherent, each empirically false, and each rendered more dangerous by the presence of the other two. The first tenet is that AI risk is bounded and binary: it either works or it does not, and if it fails, it fails in ways that are observable, containable, and recoverable through established incident management procedures. The second tenet is that AI governance is an internal matter, controllable through policy, procurement standards, model validation procedures, and technical oversight exercised within the institution's own four walls. The third tenet is that regulatory compliance constitutes risk management, and that if an institution can demonstrate compliance with relevant data protection legislation, financial technology guidance notes, and operational risk standards, it has discharged its governance obligations with respect to AI.

The first tenet fails because AI risk is not binary; it is emergent, non-linear, and in certain configurations, self-reinforcing in ways that bounded risk models cannot capture and observable incident management cannot prevent. The second tenet fails because AI governance is not an internal matter; it is a supply-chain problem, an ecosystem problem, and, in the South African context, a concentrated infrastructure problem of the first order. The third tenet fails for a reason that South Africa has already been forced to learn in a different regulatory context, at a cost that should have been sufficient to institutionalise the lesson: regulatory compliance is a floor, not a ceiling, and a floor that does not address the actual risk is not a safety feature; it is a false assurance. Taken together, these three false tenets produce an institution that is deploying AI rapidly, governing it minimally, and measuring its governance success against criteria that bear no meaningful relationship to the risks it has actually introduced.

Is it possible that South Africa's financial institutions genuinely do not see the threat surface they are already operating inside? The answer, on the evidence available, is that partial visibility has been mistaken for comprehensive understanding. Boards and executive committees are presented with AI risk dashboards that measure the risks governance frameworks were designed to detect; they are not presented with the risks that governance frameworks have not yet been designed to address. This distinction is not academic: the risks that existing frameworks detect are the risks of yesterday's AI deployment; the risks that existing frameworks cannot detect are the risks of the AI deployment that is happening today, at scale, across credit, liquidity, identity, and compliance systems, in an environment whose adversarial sophistication is advancing faster than any institutional defence that relies on yesterday's detection instruments.

The comfortable institution is not the well-governed institution; the comfortable institution is the one whose board has been given insufficient reason to be uncomfortable, and in the context of AI governance in South African banking, insufficiency of reason has been produced not by the absence of risk but by the absence of instruments capable of making the risk visible.

The Threat Surface Mapped: Seven Vectors Without Names in Any Current Policy Framework

The unseen threat surface is not a metaphor for complexity; it is a precise operational description of a condition in which risk exists, compounds, and propagates through systems whose operators cannot see it clearly enough to govern it, because the instruments of governance were designed to detect the risks that have already been named, not the risks that have not yet been. Within South Africa's financial sector, the threat surface comprises at least seven distinct vectors, none of which is fully addressed by any current governance framework, and several of which are actively expanding as AI deployment accelerates without commensurate investment in governance rigour. The failure to name these vectors is not the ignorance that precedes knowledge; it is the selective blindness of institutions and regulatory bodies that have found the mythology of AI capability more commercially and politically convenient than the discipline of AI risk governance. To name them, with operational specificity and without institutional protection of the kind that comfortable ignorance provides, is the first act of governance. What follows is not a taxonomy for academic satisfaction; it is an inventory of active, present-tense institutional exposure.

Of these seven vectors, the second, the deepfake-mediated compromise of know-your-customer procedures, demands immediate operational prioritisation because it is both the most immediately exploitable and the least visibly governed within South Africa's current regulatory and institutional framework. South Africa's retail banking sector has aggressively digitised its customer onboarding and identity verification processes over the past decade, driven by the twin imperatives of financial inclusion and competitive efficiency, and producing genuine gains on both counts.

Capitec Bank, which by customer account numbers had become South Africa's largest retail bank, built its competitive position substantially upon the accessibility and speed of its digital onboarding and transactional model, demonstrating that digitised banking at scale is both operationally viable and commercially compelling in the South African market. TymeBank, operating without a physical branch network, proved that a fully digital retail banking model can achieve meaningful market penetration at a cost structure that traditional branch-based models cannot match. Discovery Bank's integration of vitality-linked behavioural data into product design represents a genuine conceptual innovation that has attracted international attention as a model for data-driven financial services.

These achievements deserve unqualified recognition; they represent real institutional innovation in a market that has historically underserved significant portions of the population. Each is also, by virtue of its dependence on digital verification, exposed to the synthetic identity attack surface. That exposure urgently requires adversarially robust governance investment which, to the extent visible in current public regulatory discourse, has not yet been provided at a level commensurate with the operational risk involved.

South Africa's Compounded Vulnerability: The Structural Conditions Already Set for Systemic Shock

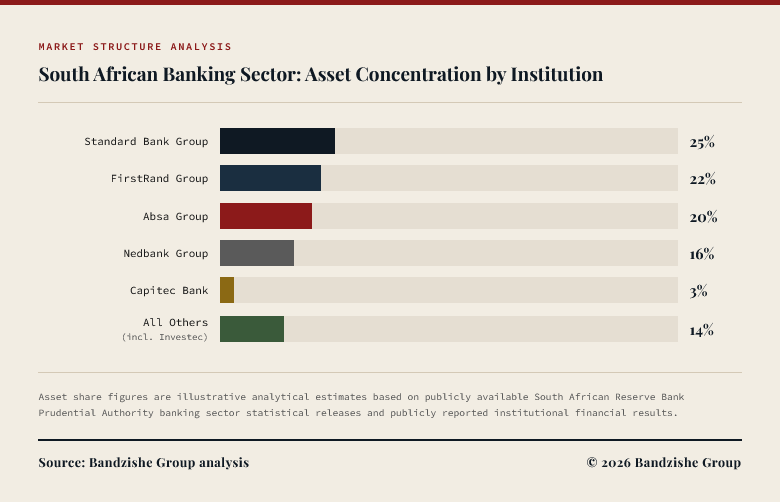

South Africa enters this threat environment carrying pre-existing structural vulnerabilities that a determined adversary, or an undirected systemic shock, will exploit with a dispassion that no board resolution will interrupt. The country's banking sector is simultaneously highly sophisticated and highly concentrated, a combination that produces a system capable of absorbing routine stress with genuine competence but acutely susceptible to correlated, novel disruptions for which its risk frameworks were not originally designed. The five institutions that dominate South Africa's banking landscape, Standard Bank, FirstRand operating through First National Bank, Absa Group, Nedbank, and Capitec, collectively control the overwhelming majority of the sector's assets, retail deposits, and payment infrastructure. Concentration is not inherently pathological; it can produce regulatory clarity, capital efficiency, supervisory coherence, and the scale economies that support real economy credit needs at a cost that fragmented banking systems cannot achieve.

The pathology emerges precisely when institutional concentration intersects with a novel, inadequately governed risk category, because the failure modes of one institution's AI systems, or of a shared AI vendor's platform serving multiple institutions simultaneously, propagate across a concentrated sector without triggering any established early warning mechanism designed to detect that specific propagation pathway. Homogeneity of institutional response, which high concentration tends to produce through shared regulatory exposure, shared vendor relationships, and shared macroeconomic conditioning, becomes a force multiplier for correlated error rather than a foundation for collective resilience.
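The shared-vendor pathway described above can at least be made measurable. What follows is a minimal sketch, not a supervisory method drawn from any framework cited here: it borrows the Herfindahl-Hirschman Index from competition analysis and applies it to the share of sector activity routed through each AI vendor. The function name `vendor_hhi`, the vendor labels, and every figure in `sample` are hypothetical placeholders rather than data about any real institution.

```python
from collections import defaultdict


def vendor_hhi(exposures):
    """Herfindahl-Hirschman Index of vendor concentration.

    exposures: iterable of (vendor, weight) pairs, where weight is the share
    of sector activity (for example, assets) routed through that vendor.
    Returns a value in (0, 1]; 1.0 means one vendor serves everything.
    """
    totals = defaultdict(float)
    for vendor, weight in exposures:
        if weight < 0:
            raise ValueError("weights must be non-negative")
        totals[vendor] += weight
    grand_total = sum(totals.values())
    if grand_total == 0:
        raise ValueError("at least one positive weight is required")
    # Sum of squared shares: the more activity one vendor carries, the higher the index.
    return sum((w / grand_total) ** 2 for w in totals.values())


# Hypothetical illustration only: five banks, three vendors, invented shares.
sample = [
    ("vendor_a", 0.30),
    ("vendor_a", 0.25),
    ("vendor_b", 0.20),
    ("vendor_a", 0.15),
    ("vendor_c", 0.10),
]
hhi = vendor_hhi(sample)
```

With these invented shares, three of the five banks sit on the same vendor and the index comes out at about 0.54, far above the 0.2 that five banks on five separate vendors of equal size would produce. The point of the sketch is only that correlated exposure can be expressed as a number a board could track; which weights and thresholds are appropriate is a question the text leaves open.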

South Africa's physical infrastructure creates a compounding condition that has no meaningful analogue in the major economies whose AI governance frameworks the country is, selectively and incompletely, attempting to adapt. The Eskom load-shedding crisis, which in 2023 reached Stage 6 and subjected the country to scheduled power interruptions across extended periods, exposed the dependence of financial infrastructure on continuous power supply in ways that the sector's risk management frameworks had not adequately stress-tested at the scale of AI system deployment. Data centres, payment processing systems, branch networks, and mobile banking infrastructure each experienced operational disruption, but the implications for AI systems, which require continuous computational resources, uninterrupted network connectivity, and data pipeline integrity to operate reliably and safely, were insufficiently analysed and even less adequately remediated in the context of AI governance specifically.

An AI credit decisioning system that produces corrupted outputs due to a power interruption mid-computation is not an exotic scenario in South Africa; it is a plausible routine operational condition that belongs in every AI system's deployment risk register. An AI fraud detection system that loses real-time data access during scheduled load-shedding creates windows of vulnerability that, during the periods of South Africa's most acute power stress, were measured not in minutes but in hours. The intersection of AI operational dependency and power infrastructure intermittency constitutes a threat vector with no meaningful precedent in the Basel Committee's published operational risk standards, in the Financial Stability Board's AI governance recommendations, or in the National Institute of Standards and Technology's AI Risk Management Framework, all of which were produced in institutional contexts where continuous power supply is a fixed operational assumption rather than a managed variable.

South African institutions must develop indigenous risk parameters for AI system operation under intermittent infrastructure conditions, because no global standard currently provides them, and the absence of a standard is not the absence of the risk.
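One candidate for such a parameter is a maximum tolerated data age, beyond which a model's output is withheld and the decision is routed elsewhere. The sketch below is illustrative only: `FeedStatus`, `decide`, the `batch_complete` flag, and the fifteen-minute limit used in the usage note are assumptions introduced for the example, not values drawn from any standard or institution.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum


class Action(Enum):
    SCORE = "score"        # inputs are fresh and complete; model output may be used
    FALLBACK = "fallback"  # route to static rules or manual review instead


@dataclass(frozen=True)
class FeedStatus:
    last_update: datetime  # timestamp of the newest record the model can see
    batch_complete: bool   # False if the last pipeline run was cut short


def decide(feed: FeedStatus, now: datetime, max_age: timedelta) -> Action:
    """Refuse to score on stale or partially written inputs."""
    if not feed.batch_complete:
        # A run interrupted mid-write (for example by a power cut) is never trusted.
        return Action.FALLBACK
    if now - feed.last_update > max_age:
        # Data older than the tolerated age: the model would be scoring the past.
        return Action.FALLBACK
    return Action.SCORE


# Hypothetical timestamps for illustration.
now = datetime(2023, 6, 1, 12, 0, tzinfo=timezone.utc)
fresh = FeedStatus(last_update=now - timedelta(minutes=2), batch_complete=True)
stale = FeedStatus(last_update=now - timedelta(hours=3), batch_complete=True)
```

Calling `decide(fresh, now, timedelta(minutes=15))` permits scoring, while the same call with `stale` falls back. The design choice worth noting is that the guard fails closed: when the state of the feed is in doubt, the model is bypassed rather than trusted, and the tolerated age becomes an explicit, auditable entry in the risk register rather than an unstated assumption.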

South Africa's Financial Action Task Force (FATF) grey-listing, imposed in February 2023 and officially resolved in October 2025 following a 32-month multidisciplinary remediation effort, exposed deep-seated institutional weaknesses, largely a legacy of the state capture era. These systemic gaps in oversight and enforcement underscore a broader pattern of institutional fragility, the implications of which for the emerging demands of AI governance and automated financial monitoring are only beginning to be addressed with the necessary urgency. The grey-listing was not primarily a failure of written regulation: South Africa's anti-money-laundering and counter-financing-of-terrorism legislative framework was, in many formal respects, comprehensive and internationally consistent. The failure was operational and institutional: the capacity to detect, investigate, and prosecute financial crime did not match the sophistication of the threats the framework was designed to address, and the gap between nominal regulatory compliance and actual operational capability had been allowed to widen without adequate institutional attention to the consequences of that widening. AI governance in South Africa is at substantial and demonstrable risk of reproducing this precise pattern.

The country possesses general data protection legislation in the Protection of Personal Information Act, and the South African Reserve Bank has issued guidance notes on financial technology, digital innovation, and cyber resilience. The Financial Sector Conduct Authority has engaged with technology-mediated conduct risks in consumer financial services. But what is conspicuously absent, and its absence is conspicuous precisely because the deployment footprint it should govern is already substantial, is a comprehensive, operationally enforceable AI risk governance framework for the financial sector, one that addresses the systemic risk vectors documented in this analysis with the specificity and operational authority that those vectors require.

The FATF lesson is direct and unsparing: compliance with frameworks that do not adequately address the actual risk is not a governance achievement; it is a deferral of accountability, and deferral of accountability accumulates into the kind of institutional failure that requires emergency remediation at a cost that proactive governance would have rendered unnecessary.

The Legacy Infrastructure Trap: When Old Pipes Meet New Poisons in a New AI Threat Environment

There is a structural irony embedded in the digital modernisation strategies of South Africa's major banks that deserves naming with directness rather than diplomatic circumlocution: the AI systems being deployed with considerable institutional and commercial fanfare sit, in several cases, atop core banking infrastructure that carries the technical debt of multiple decades. Core banking systems, the transactional engines that process deposits, loans, payments, and account management at the foundational layer of every financial institution, continue in some cases to operate on platforms and in computing environments whose original design predates not merely the current generation of machine learning but the modern internet itself. This is not a South African peculiarity; it is a global banking sector condition, documented in risk analyses from the Bank of England's operational resilience framework to the Reserve Bank of Australia's technology risk supervisory guidance, and it represents one of the most consequential unresolved challenges in institutional finance across every major market.

However, in South Africa, the gap between the sophistication of the AI systems being deployed and the resilience of the infrastructure upon which those systems depend is rendered particularly consequential by the operating environment: intermittent power supply, network instability, an expanding adversarial attack surface, and macroeconomic volatility that pushes AI models towards the edges of their training distributions with greater frequency than the infrastructure was designed to handle. The illusion that a sophisticated AI layer deployed above an aged core banking system renders that system resilient is not merely technically incorrect; it is precisely the kind of category error that produces institutional failures whose causes are not immediately identifiable and whose costs are therefore not immediately containable.
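The drift of live inputs towards the edges of a model's training distribution is something that can be monitored. A minimal sketch follows, using the Population Stability Index, a common drift measure in credit modelling; it is offered as one possible instrument, not as a method this analysis attributes to any bank or regulator. The bin edges, the sample values, and the 0.25 alert level mentioned afterwards are assumptions for illustration.

```python
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between a training sample and a live sample.

    edges: ascending bin boundaries; values beyond them fall into the end bins.
    eps: floor applied to empty bins so the logarithm stays defined.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            idx = sum(1 for e in edges if v >= e)  # index of the bin holding v
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    p, q = shares(expected), shares(actual)
    # Each term is non-negative; the sum grows as the two distributions diverge.
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical feature values: training data spread evenly, live data piled at one edge.
edges = [3, 6, 9]
train = list(range(12))
live = [9, 10, 11] * 3 + [0, 1, 2]
drift = psi(train, live, edges)
```

Identical samples give an index of zero, and the skewed `live` sample above gives a value well beyond 0.25, a rule-of-thumb level often treated as a significant shift. A check of this kind does not make the underlying infrastructure more resilient, but it does convert "the model is operating outside its training distribution" from an after-the-fact diagnosis into a monitored condition.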

Application programming interfaces, the integration layers that allow AI systems to communicate with core banking infrastructure and with the expanding ecosystem of third-party services, financial data providers, cloud platforms, and regulatory reporting systems, create exposure points that neither the AI vendor, the core banking vendor, nor the institution's internal governance framework fully governs in an integrated and adversarially-conscious manner. Every API integration represents a potential vector for adversarial inputs designed to manipulate the AI system connected through it; every API call represents a potential source of sensitive data exfiltration if the interface is inadequately secured, inadequately monitored, or inadequately protected against the injection attacks that adversarial AI now makes operationally feasible at commercial scale. South African banks have invested real resources in API security, and the sector's engagement with open banking frameworks and real-time payment infrastructure has produced genuine regulatory and technical progress that deserves acknowledgment.

But the combination of legacy core systems, rapidly expanding AI integration, a proliferating third-party vendor ecosystem operating across multiple regulatory jurisdictions, and an external threat environment in which adversarial AI is commercially accessible at negligible cost to any actor with intent, creates a risk profile for which existing API security investments were simply not calibrated. The Bangladesh Bank incident of February 2016 demonstrated precisely this dynamic in a different but structurally identical context: the vulnerability was not in the primary messaging infrastructure but in the integration layer between the bank's internal systems and that infrastructure, a layer that was inadequately secured and monitored. South African banks whose AI systems operate through analogous integration layers have not, in general, subjected those layers to the adversarial scrutiny that the Bangladesh Bank's experience demonstrably mandates, and the reasonable explanation for this omission, that the Bangladesh Bank incident has been taxonomised as a cybersecurity event rather than an AI governance event, is precisely the definitional error this analysis identifies at its outset.

The gap between digital front-end sophistication and core system resilience is not a failure of institutional ambition; it is a failure of sequencing, and sequencing failures are both more common in institutional life and more correctable than ambition failures, provided the correction is undertaken before the sequence failure becomes a failure of a more consequential kind. South Africa's major banks have, with genuine success, built consumer-facing digital channels that represent real quality and real innovation, channels that have demonstrably improved financial inclusion, reduced transaction costs, and placed South Africa's retail banking sector among the more digitally capable in the developing world. Capitec Bank's radical simplicity model and extraordinary retail penetration demonstrated that South African consumers will engage with digital banking at scale when the offering is designed for their actual circumstances rather than for the circumstances of consumers in the economies where the original digital banking models were developed. TymeBank's fully branchless model proved that cost economics and digital accessibility can be aligned in the South African mass-market retail segment without the branch network infrastructure that traditional retail banking assumed to be indispensable. Discovery Bank's vitality-linked behavioural integration represents a conceptual innovation sufficiently distinctive to attract genuine international academic and practitioner interest. These are achievements that merit recognition without qualification.

However, the quality of a consumer-facing digital channel is not an index of the resilience of the infrastructure that underlies it, and the AI systems operating within those channels are not evaluated, in general, against the systemic criteria that their operational importance demands from a risk governance perspective. The board that approves a digital modernisation investment on the basis of its customer acquisition and revenue potential, without demanding a corresponding and proportionate AI governance investment, is making a strategic choice even if it does not recognise it as one: it is accepting the commercial opportunity whilst deferring the systemic risk cost, and deferred systemic risk costs do not remain deferred.

The Regulatory Void: Governing What Existing Policy Frameworks Have Not Yet Named

Every governance framework is, by structural necessity, retrospective. It encodes institutional understanding of risks that have already occurred, in forms that have already been observed, through mechanisms that have already been studied with sufficient rigour to produce consensus about appropriate regulatory response. This structural limitation is not a failure of regulatory intelligence; it is an inherent characteristic of governance design, well understood in regulatory theory and, in most operating contexts, manageable through regulatory agility, supervisory capacity, and proactive industry engagement that allows regulators to stay within an acceptable proximity to the risks they govern. What distinguishes AI risk from the risks that existing financial governance frameworks were designed to address is not merely its novelty; it is three compounding characteristics that make the standard regulatory lag genuinely dangerous in this context.

The first is the speed at which AI risk compounds: unlike credit risk or market risk, which accumulate over time horizons that allow supervisory detection and intervention, AI model drift, adversarial exploitation, and algorithmic herding can produce systemic consequences within time windows measured in minutes rather than months. The second is the opacity with which AI risk operates: regulators cannot audit the decision logic of a complex machine learning model through standard examination procedures that were designed to assess human decisions documented in auditable records. The third is the systemic scale of the gap between AI deployment velocity and governance velocity: the deployment of AI systems in financial services is proceeding faster than regulatory development can match using conventional instruments, in every major financial jurisdiction globally, not merely in South Africa. The consequence is not merely a regulatory lag; it is a governance vacuum into which risk accumulates without the benefit of any institutional authority empowered to measure, name, and contain it with the precision that its systemic implications require.

The South African Reserve Bank has demonstrated genuine institutional intelligence in its approach to financial technology innovation, and intellectual honesty demands that this be acknowledged clearly and without reservation. Its regulatory sandbox, the Intergovernmental Fintech Working Group, and its active engagement with the Bank for International Settlements' Innovation Hub in Basel reflect a central bank that is intellectually alert, institutionally engaged, and genuinely serious about the dynamics of the sector it governs. The Financial Sector Conduct Authority has similarly engaged with technology-mediated conduct risks in consumer financial services, producing guidance on fair treatment of customers in digitised service environments that reflects genuine regulatory effort.

But the existing regulatory apparatus was designed to govern financial risk, conduct risk, and systemic risk as traditionally conceived: risks that arise through human decisions, institutional behaviours, and market mechanisms that regulators can observe, measure, and address through established supervisory instruments, including examination, reporting requirements, capital rules, and conduct standards. It was not designed to govern AI systems that produce financial risk, conduct risk, and systemic risk through mechanisms that existing supervisory instruments cannot fully observe, and whose internal decision logic cannot be audited through the examination procedures that regulatory capacity currently supports. The gap between what the regulatory framework was designed to govern and what it is now required to govern is not a failure of regulatory ambition in South Africa or elsewhere; it is a structural mismatch between the speed of technological deployment and the pace of institutional adaptation, a mismatch that is growing faster than it is being closed.

The Financial Stability Board's 2022 report on the financial stability implications of artificial intelligence made a series of recommendations addressed to financial regulators globally, including enhanced monitoring of AI model concentration risk across the sector, development of AI-specific supervisory capacity and examination competence within regulatory bodies, requirements for model interpretability and explainability in systemically significant financial applications, and international co-ordination on AI governance standards for institutions of systemic importance. South Africa's regulatory evolution since that report, whilst not without genuine progress in specific areas, has not produced a comprehensive AI risk governance framework commensurate with the sector's actual AI deployment footprint or with the systemic risk implications of that deployment in the specific structural conditions of South Africa's financial system.

The European Union's AI Act, which entered into force in August 2024, classifies specific AI applications in credit scoring, risk assessment, and financial services as high-risk systems subject to rigorous pre-deployment conformity assessment requirements, documentation obligations, and ongoing monitoring mandates, establishing a regulatory precedent whose substantive requirements South African institutions with European operations, partnerships, or technology vendor relationships must already navigate. South African institutions operating exclusively domestically face no equivalent requirement, and the absence of a domestic equivalent is not a regulatory convenience for those institutions; it is a governance gap that leaves systemic risk accumulating in a space that no regulatory authority is currently equipped to measure or contain.

Four Instructive Shocks: The Warnings That History Has Already Delivered in Expensive Ink

History's most instructive characteristic is that it charges its tuition in real time, in the currency of institutional failure, regardless of whether the lesson's designated recipient was paying adequate attention. Four events in recent financial history carry instructional weight for South African institutions that have not yet read them with the forensic rigour that their eventual cost demands. In each case the lessons are available, and failing to apply them is a preventable institutional choice rather than an excusable analytical blind spot.

The specific lesson of Silicon Valley Bank's collapse for South African institutions has not received the analytical attention it demands, and the omission is consequential rather than merely academic. The failure mechanism at SVB was not exotic: interest-rate risk concentrated in a held-to-maturity portfolio, combined with a depositor base that was both geographically concentrated and connected through shared digital communication channels, created the structural pre-conditions for rapid liquidity stress that a well-calibrated risk management function should have identified, modelled, and addressed before the stress arrived. What was structurally unprecedented was the velocity at which the bank run that followed developed, compressed into a timeline that outpaced the crisis response capacity of every governance mechanism SVB had designed.

South African banks, whose retail depositor bases include substantial proportions of customers connected through WhatsApp groups, Twitter, Facebook, and the community information networks that have become primary channels for the rapid circulation of financial information in South African society, face the same AI-mediated information velocity risk as SVB in an environment whose social network dynamics amplify information, accurate or otherwise, with a speed that no human crisis response function can reliably outpace. The institution that has not modelled this risk with scenario specificity, calibrated to South African social media penetration rates and community information sharing dynamics, has not completed its liquidity risk management function; it has completed the version of that function that was adequate for 2015, in an environment that has changed fundamentally since then.
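The comparison between information velocity and response capacity can be made concrete with a deliberately simple model. The sketch below is illustrative only: it assumes awareness of a withdrawal signal spreads logistically through the depositor base and that every aware depositor withdraws the same share of their balance. The function name, the parameters, and every figure are hypothetical and are not calibrated to any South African institution or to SVB.

```python
from typing import Optional


def hours_until_buffer_exhausted(
    deposits: float,
    liquid_buffer: float,
    initial_aware_share: float,
    hourly_spread_rate: float,
    withdrawal_share: float,
    max_hours: int = 240,
) -> Optional[int]:
    """Return the first hour at which cumulative withdrawals reach the
    liquid buffer, or None if that never happens within max_hours."""
    aware = initial_aware_share
    for hour in range(1, max_hours + 1):
        # Logistic spread: each aware depositor alerts others, and growth
        # slows as the share of still-unaware depositors shrinks.
        aware = min(1.0, aware + hourly_spread_rate * aware * (1.0 - aware))
        # Assumption: every aware depositor withdraws the same share of
        # their balance as soon as they become aware.
        withdrawn = deposits * aware * withdrawal_share
        if withdrawn >= liquid_buffer:
            return hour
    return None


# Hypothetical figures: the same bank under two information-velocity assumptions.
scenario = dict(deposits=100.0, liquid_buffer=20.0,
                initial_aware_share=0.001, withdrawal_share=0.5)
slow = hours_until_buffer_exhausted(hourly_spread_rate=0.1, **scenario)
fast = hours_until_buffer_exhausted(hourly_spread_rate=1.0, **scenario)
response_hours = 24  # assumed time the crisis protocol needs to act

for label, hours in (("slow spread", slow), ("fast spread", fast)):
    outpaced = hours is not None and hours < response_hours
    print(label, hours, "outpaces response" if outpaced else "within response window")
```

The point of the exercise is the comparison rather than the numbers: a protocol that is adequate under the slow-spread assumption can be outrun under the fast-spread one, with no change in the balance sheet. A production scenario would replace the single spread rate with estimates drawn from observed social media penetration and community information sharing dynamics.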

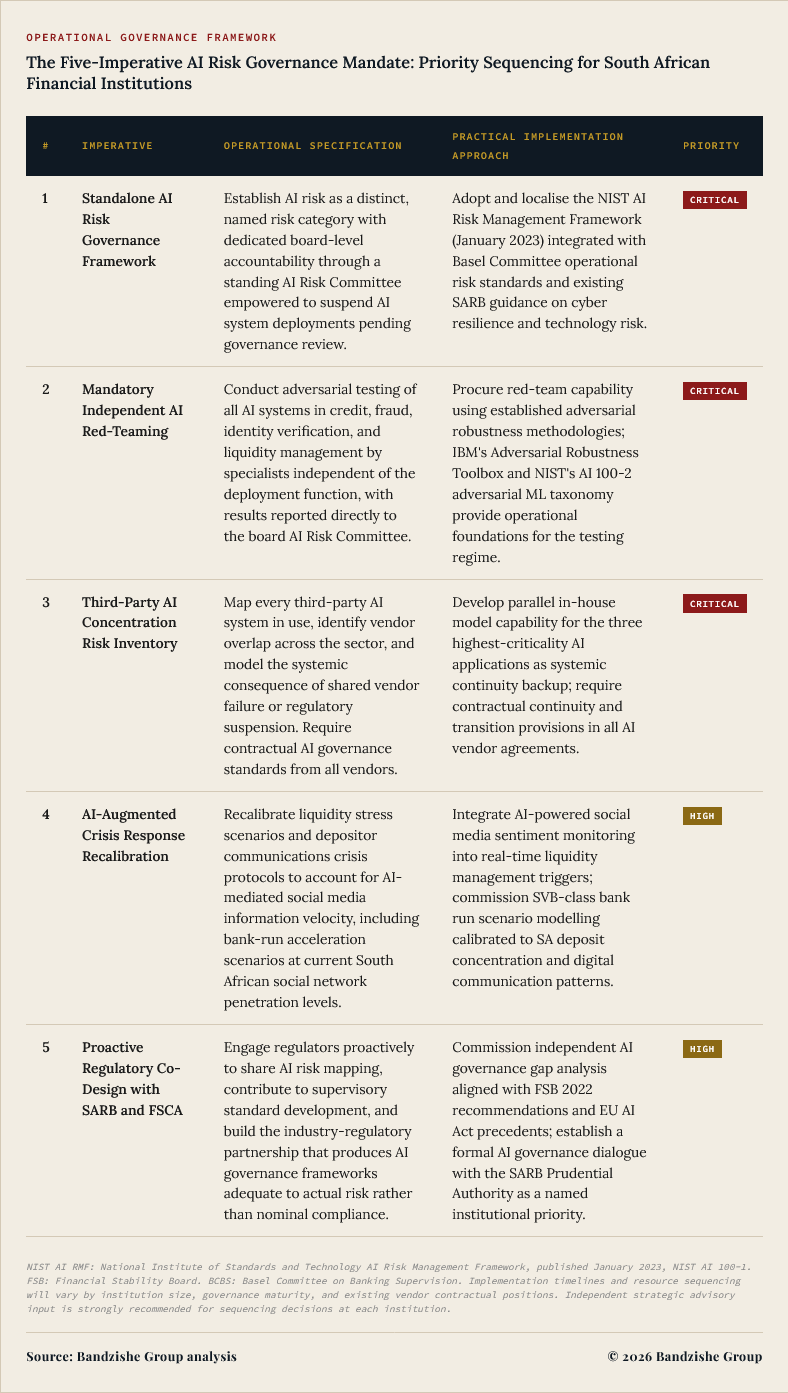

The Implementation Mandate: What Decisive Institutions Do First and Why Sequence Is Strategy

The gap between insight and action is where institutions either distinguish themselves through decisive, sequenced governance investment or surrender to the slow accumulation of inadequately named risk that will eventually demand a reckoning in conditions less favourable than the present. The imperatives that follow are not aspirational gestures directed at a distant future state; they are operational requirements grounded in what the most resilient financial institutions globally are already executing, and what South African institutions have the organisational capacity, if not yet the consistent institutional will, to match with equivalent rigour. What distinguishes the institutions that will be studied as exemplars of AI governance from those that will be studied as cautionary cases is not primarily intellectual capacity, technical expertise, or access to resources: South Africa's major financial institutions possess all three in sufficient measure. What distinguishes them is whether the board's recognition of AI governance as a strategic imperative precedes the event that makes its urgency undeniable, or whether that recognition arrives, at greater cost and under greater constraint, in the aftermath of an event that preventative governance would have rendered unnecessary.

The question before every chief executive, every board member, and every chief risk officer in South Africa's financial sector is not whether these imperatives are justified; the preceding analysis makes that case without remainder. The question is whether the institution will discharge them before the shock or explain itself after it.

The first imperative demands elaboration that directly addresses the institutional objections it most commonly encounters, because those objections, whilst not made in bad faith, reflect precisely the definitional error this analysis identified at its outset. Boards and executive committees routinely resist the establishment of a standalone AI risk governance framework on the grounds that existing technology risk frameworks are sufficiently adaptable to capture AI-specific risks, and that enterprise risk management structures that have served their institutions through multiple stress cycles have demonstrated the flexibility to absorb new risk categories without requiring dedicated governance instruments. This objection is not without institutional merit in contexts where the new risk category exhibits failure modes, time horizons, and propagation pathways broadly analogous to the categories the existing framework was designed to govern. AI risk does not meet that condition. Existing technology risk frameworks were designed to govern systems whose failure modes are observable within the time horizons of human monitoring, whose decision logic is auditable through code review and process documentation, and whose operational boundaries are defined by human-written specifications that human reviewers can inspect and validate.

Machine learning models governing credit decisions, fraud detection, and liquidity management at scale are none of these things in any operationally meaningful sense: their failure modes can operate at speeds that outpace human monitoring, their decision logic is not auditable through code review because the logic is encoded in learned parameters, which can number from thousands to billions, rather than in human-readable specifications, and their operational boundaries shift as training data distributions diverge from operational data distributions in ways that no specification document captures. The governance framework adequate to a core banking system upgrade is not adequate to an AI credit model whose training data is accumulating distributional drift in a macroeconomic environment characterised by the kind of structural stress that South Africa has sustained over the past five years. These are not comparable governance problems, and treating them as comparable produces governance that is formally compliant and substantively inadequate.
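Distributional drift of the kind described above is measurable, which is part of why leaving it ungoverned is a choice rather than a constraint. One widely used measure is the Population Stability Index (PSI), which compares the share of observations falling into each bin at training time with the share observed in live operation. The sketch below is a minimal illustration under stated assumptions: the bin edges, sample values, and function names are hypothetical, and it is not a description of any particular institution's monitoring.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float],
               floor: float = 1e-6) -> List[float]:
    """Share of values in each bin [edges[i], edges[i+1]); the last bin also
    includes its upper edge. Values outside the edges are ignored."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)
    if total == 0:
        raise ValueError("no values fall inside the bin edges")
    # Floor empty bins so the logarithm in the PSI stays defined.
    return [max(c / total, floor) for c in counts]


def population_stability_index(training: Sequence[float],
                               live: Sequence[float],
                               edges: Sequence[float]) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_shares(training, edges)
    actual = bin_shares(live, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Hypothetical model-input values (for example, a debt-to-income ratio).
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_sample = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9]
live_sample = [0.6, 0.7, 0.8, 0.9, 0.9, 0.95, 0.85, 0.8, 0.7]

psi_stable = population_stability_index(training_sample, training_sample, edges)
psi_drifted = population_stability_index(training_sample, live_sample, edges)
print(psi_stable, psi_drifted)
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a signal that the population has shifted materially, although any threshold an institution adopts is a governance decision that should be set and owned explicitly rather than inherited by default.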

The third imperative, the third-party AI concentration risk inventory, addresses what is arguably the most structurally consequential and most consistently underprioritised dimension of South Africa's banking sector AI risk profile. Consider a concrete and operationally specific scenario: a major global AI vendor, supplying credit risk models, fraud detection systems, or identity verification platforms to three of South Africa's five largest banks, is subject to regulatory suspension in its primary jurisdiction following a non-compliance finding under the EU AI Act, or experiences a catastrophic adversarial model compromise that corrupts the outputs of its deployed systems simultaneously across all client institutions. In this scenario, three of South Africa's five largest institutions face simultaneous, correlated failure in AI systems critical to their credit, fraud, and identity functions. The systemic consequences of this scenario, in a sector whose concentration profile we have documented above, would be severe and would arrive faster than any current crisis management protocol is designed to address, because those protocols were not designed against the specific failure mechanism of shared third-party AI vendor compromise.

What makes this scenario analytically honest rather than alarmist is not that it is certain to occur; it is that the structural pre-conditions for it already exist in the current operating environment, in every South African financial institution that has not yet completed a comprehensive third-party AI concentration risk inventory and modelled its systemic consequences. The risk is present. The question is only whether it is visible.
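The inventory itself is not conceptually difficult; what is usually missing is the sector-wide view. As a minimal sketch, assuming each institution can state which vendor supplies each of its AI-dependent functions, the shared exposure can be derived mechanically. The institution names, vendor names, and function labels below are entirely hypothetical, as is the function `shared_vendor_exposure`.

```python
from collections import defaultdict
from typing import Dict, List


def shared_vendor_exposure(
    inventory: Dict[str, Dict[str, str]],
    min_institutions: int = 2,
) -> Dict[str, Dict[str, List[str]]]:
    """Map each vendor used by at least `min_institutions` institutions to the
    institutions and functions that depend on it, most widely shared first."""
    institutions = defaultdict(set)
    functions = defaultdict(set)
    for institution, systems in inventory.items():
        for function, vendor in systems.items():
            institutions[vendor].add(institution)
            functions[vendor].add(function)

    shared = [v for v in institutions if len(institutions[v]) >= min_institutions]
    # Most widely shared vendor first; ties broken alphabetically for stable output.
    shared.sort(key=lambda v: (-len(institutions[v]), v))
    return {
        v: {"institutions": sorted(institutions[v]), "functions": sorted(functions[v])}
        for v in shared
    }


# Hypothetical sector inventory: institution -> {AI function: supplying vendor}.
inventory = {
    "Bank A": {"credit_scoring": "Vendor X", "fraud_detection": "Vendor Y"},
    "Bank B": {"credit_scoring": "Vendor X", "identity_verification": "Vendor Z"},
    "Bank C": {"fraud_detection": "Vendor X", "identity_verification": "Vendor Z"},
    "Bank D": {"credit_scoring": "In-house"},
}

exposure = shared_vendor_exposure(inventory)
for vendor, detail in exposure.items():
    print(vendor, detail["institutions"], detail["functions"])
```

In this illustrative inventory, a single vendor sits behind credit or fraud functions at three institutions, which is precisely the correlated-failure condition the scenario describes. No individual institution can see that picture from its own vendor register alone, which is why the exercise has to be shared with, or co-ordinated by, the supervisor.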

The Reckoning: Design the Governance or Inherit Its Absence at a Price That Cannot Be Negotiated

Every crisis in financial history arrived carrying a warning that was legible, in retrospect, to anyone with the analytical instruments to read it. Every warning arrived in a vocabulary that the institutions it addressed had not yet learnt to read, through mechanisms they had not yet learnt to monitor, at a speed for which their defences had not yet been calibrated. The South Sea Bubble was visible to those who understood the difference between capitalised expectation and capitalised cash flows. The 2008 financial crisis was visible to those who understood the mathematical impossibility of the correlation assumptions embedded in collateralised debt obligation pricing models. Knight Capital's catastrophic 2012 loss was visible to anyone who asked what happened when an algorithmic system with no effective kill switch was deployed without adequate pre-launch validation. Bangladesh Bank's vulnerability was visible to anyone who audited the security of third-party integration layers rather than assuming that the security of the primary network extended to the interfaces connecting to it. SVB's bank-run velocity risk was visible to anyone who modelled the speed of coordinated digital communication against the speed of crisis response protocols designed for a pre-digital depositor communication environment.

Mythos AI Shock will not be different in its legibility; it is legible now, to those willing to read it, in the seven vectors mapped in this analysis. It will not be different in its indifference to institutional readiness; it will arrive on its own schedule, not on the schedule of the governance investments it should have prompted. What it may be, if South African institutions act with the urgency and specificity this analysis demands, is different in its consequences, because the institutions that govern AI risk with rigour before the shock become the benchmark, and the institutions that govern it after become the case study.

What is required is not incremental adjustment to existing risk frameworks; it is the categorical recognition that AI risk is a systemic risk category distinct from those that existing frameworks were designed to govern, requiring dedicated governance instruments, dedicated supervisory capacity, and dedicated board-level accountability that cannot be discharged through delegation to a technology committee or resolved through a compliance checklist. South Africa's financial institutions have demonstrated, repeatedly and under conditions of genuine difficulty, that they possess the institutional resilience, the organisational sophistication, and the strategic seriousness to meet challenges that would fracture systems with less structural integrity. The sector's navigation of the 2007 to 2009 global financial crisis, during which South African banks did not require state capital injections and maintained their operational and regulatory credibility through a period of extraordinary global systemic stress, demonstrated a conservatism of capital management and quality of risk discipline that was genuinely rare among international peers. The sector's response to the FATF grey-listing of February 2023, whilst its initiation reflected governance failures that should not have been allowed to accumulate over the preceding years, demonstrated the institutional capacity for credible and rapid remediation when the consequences of inaction became sufficiently visible to compel action at the level of urgency the facts demanded. Both examples confirm the same conclusion: the capacity to govern this risk category with the rigour and specificity it demands is not in question. What remains in question is whether that capacity will be deployed in advance of the shock, in conditions that allow rational, sequenced, and cost-efficient governance investment, or deployed in its aftermath, at greater cost, under greater constraint, and with less strategic control over its own narrative.

The institutions that will be studied in five years as models of AI risk governance in emerging market financial systems will not be distinguished by their AI technology investments or their digital innovation achievements, both of which may be impressive and may deserve recognition. They will be distinguished by the quality of the governance they placed around those investments: the rigour of their threat surface assessments, the independence of their red-teaming programmes, the specificity of their third-party concentration risk analyses, the calibration of their crisis response protocols to the AI-mediated information environment in which a future liquidity stress will occur, and the quality of their proactive engagement with regulators to build a supervisory framework adequate to the actual risk rather than a framework adequate to the vocabulary of risk that was available when the framework was first designed. These are not abstract institutional virtues; they are specific, operational, actionable commitments that can be made, resourced, and executed in the current period, before the shock that will make their necessity undeniable arrives. The choice is available now. The conditions for rational governance investment exist now. The evidence base for that investment is documented in this analysis and in the case precedents whose lessons South Africa's institutions have not yet fully absorbed. The question is not whether you understand the analysis. The question is what you will do about it before the analysis becomes redundant because the event it described has already occurred.

The Challenge to Leadership: Govern the Risk Before the Risk Governs You

This analysis is not a warning about a future risk. It is a map of a present one. Every institution that has deployed AI in credit, fraud, identity, or liquidity functions without completing a seven-vector threat surface assessment, a third-party AI concentration risk inventory, an independent adversarial red-team exercise, and a board-level AI governance mandate with named personal accountability is not managing a future liability; it is carrying a current one. The board member who reads this analysis and commissions a review is making one kind of institutional choice. The board member who reads it and commissions a communication exercise is making another. The distinction between those two choices is the distinction between governance and performance, and in the context of AI risk, performance without governance has a documented and expensive failure mode.

Examine your institution against these questions, not in a risk committee, but in your own honest strategic reasoning, before the next scheduled board meeting removes the opportunity to reason about them in advance:

• Can your board name, with operational specificity and without deferring to a technology committee, all seven categories of AI risk to which your institution is currently exposed, and state with equal precision which of them is governed by a framework adequate to its systemic consequence and which is not?

• Has your institution conducted an independent adversarial red-team exercise against its AI systems in the past twelve months, and has the result been reported directly to the board rather than filtered through the technology function that commissioned the AI deployment and whose incentive structure rewards deployment velocity over governance rigour?

• Does your institution know, precisely and currently, which AI systems are supplied by vendors also supplying other institutions in the South African sector, and has it modelled, with scenario specificity, the systemic consequence of the most widely shared vendor experiencing a catastrophic failure or regulatory suspension simultaneously across all client institutions?

• Are your liquidity stress scenarios and depositor crisis response protocols calibrated to the speed at which AI-mediated social networks can co-ordinate and amplify a bank-run signal in the specific communications environment of South Africa's digitally connected retail depositor base, or are they calibrated to historical bank-run precedents that predate the AI-mediated information environment?

• Is your engagement with the South African Reserve Bank and the Financial Sector Conduct Authority on AI governance proactive, documented, substantive, and driven by your own AI risk assessment, or is it reactive, compliance-oriented, and driven by the regulatory calendar rather than by the actual accumulation of ungoverned risk?

If any of these questions cannot be answered with clarity and conviction, the threat surface is not merely unseen; it is ungoverned. The institution that completes this governance work before the shock becomes the sector's standard. The institution that defers it becomes the sector's lesson. The choice is present. The moment for its exercise is now.

Images by Bandile Ndzishe of Bandzishe Group

About Bandile Ndzishe

Bandile Ndzishe is the CEO, Founder, and Global Consulting CMO of Bandzishe Group, a premier global consulting firm distinguished for pioneering strategic marketing innovations and driving transformative market solutions worldwide. He holds three business administration degrees: an MBA, a Bachelor of Science in Business Administration, and an Associate of Science in Business Administration.

With over 30 years of hands-on expertise in marketing strategy, Bandile is recognised as a leading authority across the trifecta of Strategic Marketing, Daily Marketing Management, and Digital Marketing. He is also recognised as a prolific growth driver and a seasoned CMO-level marketer.

Bandile has earned a strong reputation for delivering strategic marketing and management services that guarantee measurable business results. His proven ability to drive growth and consistently achieve impactful outcomes has established him as a well-respected figure in the industry.

As both an AI-empowered and an AI-powered marketer, Bandile brings two distinct strengths: he is empowered by AI to achieve his marketing goals more effectively, and he uses AI as a tool to enhance his marketing efforts and deliver the desired growth results. His professional focus resides at the nexus of artificial intelligence and strategic marketing, where he explores the profound and enduring synergy between algorithmic intelligence and market engagement.

Rather than pursuing ephemeral trends, he examines the fundamental tenets of cognitive augmentation within marketing paradigms. He analyses how AI's capacity for predictive analytics, bespoke personalisation, and autonomous optimisation precipitates a transformative evolution in consumer interaction and brand stewardship. By extension, he seeks to comprehend the strategic applications of artificial intelligence in empowering human capability and fostering innovation for sustainable societal advancement.

In essence, he explores how AI augments human decision-making and strategic problem-solving in both marketing and other domains of life. This is not merely an interest in technological novelty, but a rigorous investigation into the strategic implications of AI's integration into the contemporary principles of marketing practice and its potential to reshape decision-making frameworks, rearchitect strategic problem-solving paradigms, enhance strategic foresight, and influence outcomes in diverse areas beyond the marketing sphere.